AI is everywhere right now. Tools are deployed. Licenses are purchased. Teams are experimenting daily.

And yet, many organizations are discovering an uncomfortable truth:

AI is saving time in theory, but costing time in practice.

Workday research highlights a clear pattern: while AI helps people finish tasks faster, a meaningful share of those gains gets lost to rework. Teams end up correcting, rewriting, clarifying, and verifying what AI produces. That’s the hidden AI tax. [1]

Most leaders track gross efficiency (how much time AI saves). The better metric is net value (time saved minus time spent fixing output). When rework is ignored, AI ROI often looks stronger than it actually is.
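To make the difference between gross efficiency and net value concrete, here is a minimal Python sketch. The `AiTimeLog` class, its field names, and the hour figures are hypothetical illustrations, not numbers from the Workday research.

```python
from dataclasses import dataclass


@dataclass
class AiTimeLog:
    """Hours for one team over one period (all values are hypothetical)."""

    gross_hours_saved: float  # time AI saved on first drafts
    rework_hours: float       # time spent correcting, rewriting, and verifying

    def net_hours(self) -> float:
        # Net value = time saved minus time spent fixing output.
        return self.gross_hours_saved - self.rework_hours

    def rework_share(self) -> float:
        # Fraction of the gross gain that rework consumes (the "AI tax").
        if self.gross_hours_saved <= 0:
            raise ValueError("gross_hours_saved must be positive")
        return self.rework_hours / self.gross_hours_saved


# Illustrative numbers only: 10 hours saved, 4 hours spent on rework.
log = AiTimeLog(gross_hours_saved=10.0, rework_hours=4.0)
print(log.net_hours())     # 6.0
print(log.rework_share())  # 0.4
```

In this made-up example, a dashboard that reports only the 10 hours saved would overstate the real gain by 4 hours.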

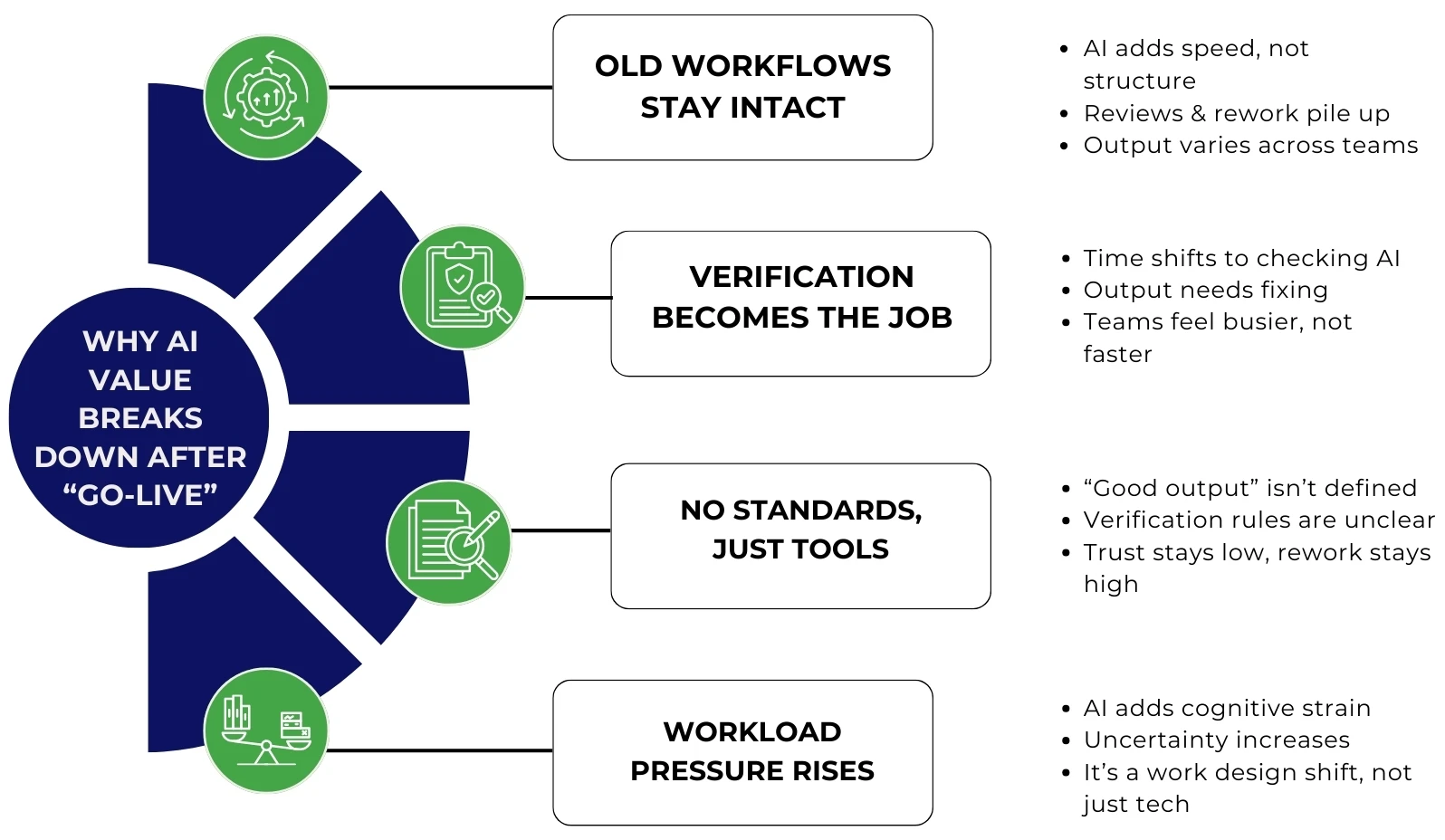

Why AI value breaks down after “go-live”

Most companies don’t struggle to activate AI. They struggle to turn AI into repeatable performance.

Here’s what usually goes wrong:

1) AI gets layered onto old workflows

If the workflow stays the same, AI simply injects faster drafts into the same process. The quality gap shows up downstream in review cycles, escalations, and rework. AI adoption without changes to roles, skills, and support creates output that varies widely across teams and individuals.

2) “Verification” becomes the new workload

Daily AI users often spend significant time auditing and refining outputs. The workload does not disappear. It moves from writing to editing, and from producing to correcting. This is why some teams feel busier even after adopting AI.

3) Training doesn’t keep pace with adoption

AI usage is high, but shared standards often lag. Teams adopt tools without consistent guidance on:

- what “good” output looks like

- what must be verified and how

- where AI should stop and human judgment should begin

Without these norms, AI output becomes inconsistent, trust stays low, and rework stays high.

4) Workforce impacts get underweighted

A rushed AI rollout can add pressure, including uncertainty, cognitive load, and a constant need to check outputs. AI is not only a technology shift. It is a work design shift. [2]

Why the mid-market feels this problem more than enterprises

Enterprises can absorb early AI inefficiency because they already have built-in capabilities: process improvement resources, governance, change enablement, QA controls, platform support, and documentation discipline.

Mid-market companies often do not.

They operate lean, so AI rework hits harder. When your best people spend hours rewriting AI outputs, you are not accelerating. You are redistributing strain.

That is why the gap widens:

- Enterprises convert AI into repeatability.

- Mid-market teams get stuck in revision cycles.

How leaders reduce the AI tax and start compounding value

The shift is simple: stop treating AI like a tool. Start treating it like a capability.

AI produces results when embedded in an operating model with clear quality, ownership, and workflow design.

1) Define “good” before you automate

If your team cannot define “right” (accuracy thresholds, required sources, tone, format, approvals), AI will generate variability. Variability always creates rework.

2) Build human-in-the-loop workflows that protect quality

The goal is not replacement. The goal is consistent handoffs:

Draft → Validate → Approve → Publish

Review should match risk. Some work needs only light checks. Some needs strict controls.
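One way to picture risk-matched review is a simple routing table. The sketch below is an assumption for illustration only: the two risk tiers, the `REVIEW_STEPS` mapping, and the choice to skip a separate approval step for low-risk work are hypothetical, not a prescribed workflow.

```python
from enum import Enum
from typing import Optional


class Risk(Enum):
    LOW = "low"    # e.g. internal notes (hypothetical tier)
    HIGH = "high"  # e.g. client-facing or regulated content (hypothetical tier)


# Assumed mapping: low-risk work gets a light check and skips formal approval;
# high-risk work goes through every handoff.
REVIEW_STEPS = {
    Risk.LOW: ["Draft", "Validate", "Publish"],
    Risk.HIGH: ["Draft", "Validate", "Approve", "Publish"],
}


def next_step(risk: Risk, current: str) -> Optional[str]:
    """Return the handoff that follows `current`, or None once published."""
    steps = REVIEW_STEPS[risk]
    idx = steps.index(current)  # raises ValueError if the step is not on this path
    return steps[idx + 1] if idx + 1 < len(steps) else None


print(next_step(Risk.LOW, "Validate"))   # Publish
print(next_step(Risk.HIGH, "Validate"))  # Approve
```

Keeping the paths in one table makes the handoffs explicit, so each team does not have to reinvent its own review rules.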

3) Fix inputs so outputs stop failing

AI cannot compensate for scattered knowledge. If key information lives in inboxes, tribal memory, or inconsistent documentation, AI will keep guessing. Your teams will keep correcting.

4) Measure net value, not time saved

Track outcomes that show whether AI is improving performance:

- rework time

- defect rate

- cycle time

- escalation rate

- quality and compliance outcomes

If rework is not dropping, ROI is not compounding.
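As a rough sketch of that last check, the helper below tests whether logged rework hours fall from one period to the next. The function name, the strict "every period must be lower" rule, and the sample figures are all hypothetical; a real scorecard might use a rolling average or a tolerance instead.

```python
def rework_is_dropping(rework_hours_by_period: list[float]) -> bool:
    """True if rework hours fall in every consecutive period (oldest first)."""
    if len(rework_hours_by_period) < 2:
        raise ValueError("need at least two periods to see a trend")
    pairs = zip(rework_hours_by_period, rework_hours_by_period[1:])
    return all(later < earlier for earlier, later in pairs)


# Illustrative quarterly rework hours, oldest first.
print(rework_is_dropping([12.0, 9.5, 7.0]))    # True
print(rework_is_dropping([12.0, 12.0, 11.0]))  # False
```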

Why Premier NX is the right partner for the mid-market AI journey

Mid-market teams do not need more AI tools. They need a repeatable way to turn AI into reliable execution, so efficiency gains do not get swallowed by revision cycles.

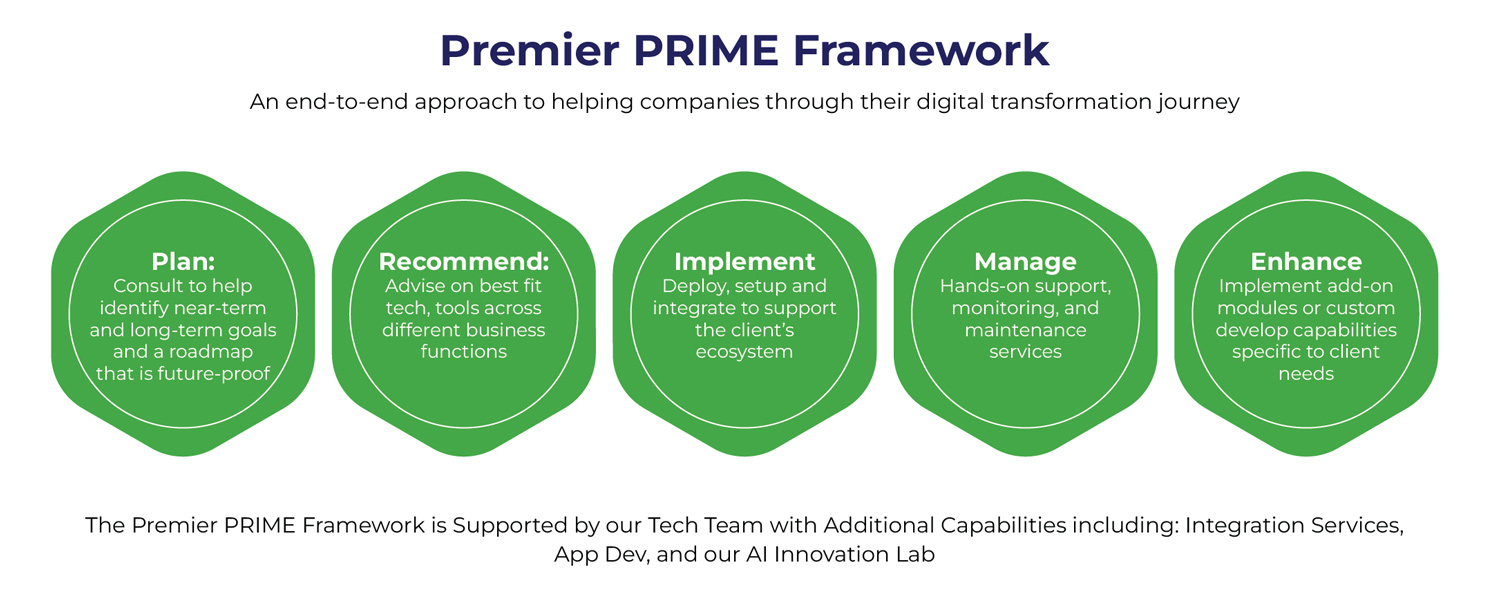

That is where Premier PRIME comes in.

PRIME is Premier’s proprietary, human-in-the-loop delivery framework designed to make transformation clear, measurable, and sustainable from roadmap to results. It helps companies move from “AI experimentation” to AI-enabled operating maturity, where performance improves over time instead of resetting every quarter.

The Premier PRIME Framework: from roadmap to results

PRIME is built around five connected phases:

- Plan: Align priorities to business goals and build a practical, future-ready roadmap

- Recommend: Select best-fit tools, integrations, and workflows based on your operating reality

- Implement: Deploy securely, integrate cleanly, and support adoption

- Manage: Run day-to-day operations with embedded support so momentum does not stall

- Enhance: Optimize continuously with new automations, integrations, and capabilities as needs evolve

Why PRIME matters for AI adoption

The AI rework tax rarely comes from AI itself. It stems from missing structure: unclear standards, inconsistent inputs, fragile workflows, and no operating rhythm for improving performance.

PRIME reduces that drag by bringing:

- business-aligned workflow design (so AI supports execution, not random output)

- human-in-the-loop guardrails (so quality stays high without slowing teams down)

- operational ownership (so AI becomes a system, not a side tool)

- continuous improvement (so value compounds instead of plateauing)

PRIME is supported by delivery capacity, including integration services, app development, and an AI Innovation Lab, helping mid-market teams implement and evolve without enterprise-sized overhead.

The question for 2026

AI adoption is no longer an advantage. Execution is.

If your teams are spending more time fixing AI than moving faster, that isn’t a tool issue. It’s an operating model issue.

Ready to turn AI investment into measurable ROI in 2026?

Book a call with Premier NX to discuss how PRIME helps mid-market teams reduce rework, build repeatable workflows, and turn AI into sustained execution.